The camera app included with Google’s Android mobile OS has a feature called “Photo Sphere” that lets you take a series of photos and combine them into a full spherical panorama. The Photo Sphere feature is included on Google Play Edition (GPE) phones (phones that run Google’s unadulterated version of Android), including my Nexus 5. When you take a Photo Sphere, the camera seamlessly stitches the individual photos into an equirectangular panorama. For example, here is a panorama I took of the rapeseed fields in central Germany:

There is a bit of distortion (see Tissot’s indicatrix), especially at the top and bottom of the image, but this is an unavoidable consequence of projecting a sphere onto a plane. On the Nexus 5 (and other GPE phones), the Gallery application lets you either view the resulting Photo Spheres as spherical panoramas or create “Little Planets” (also called “Tiny Planets”), which are actually stereographic projections of the spherical panorama. I found the effect really neat, so I wanted to see if I could recreate the projection in MATLAB.
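For reference, here is a rough sketch of the math behind a “Little Planet”. It is not taken from the Gallery app; it assumes a unit sphere, a projection from the north pole (zenith) onto the plane tangent at the south pole (nadir), and symbols I am introducing for illustration: longitude $\lambda \in [-\pi, \pi)$, latitude $\varphi \in [-\pi/2, \pi/2]$, and plane coordinates $(x, y)$. Under those assumptions, a point on the sphere lands at

$$
r = 2\tan\!\left(\frac{\pi}{4} + \frac{\varphi}{2}\right), \qquad x = r\cos\lambda, \qquad y = r\sin\lambda ,
$$

so the nadir ($\varphi = -\pi/2$) maps to the center, the horizon ($\varphi = 0$) maps to a circle of radius 2, and the zenith is pushed out to infinity. To fill an output image you would typically go the other way and look up, for each output point, the matching spot in the panorama:

$$
\varphi = 2\arctan\!\left(\frac{r}{2}\right) - \frac{\pi}{2}, \qquad \lambda = \operatorname{atan2}(y, x), \qquad r = \sqrt{x^2 + y^2}.
$$

Assuming the equirectangular image is $W \times H$ pixels with the top row at $\varphi = +\pi/2$, the source position is then roughly $u = \frac{\lambda + \pi}{2\pi}\,W$ and $v = \frac{\pi/2 - \varphi}{\pi}\,H$.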

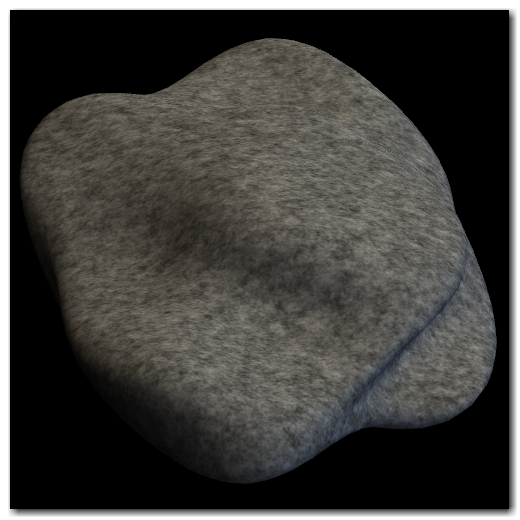

As a teaser, here’s the output of my code:

Click through to get more information on the MATLAB implementation.
